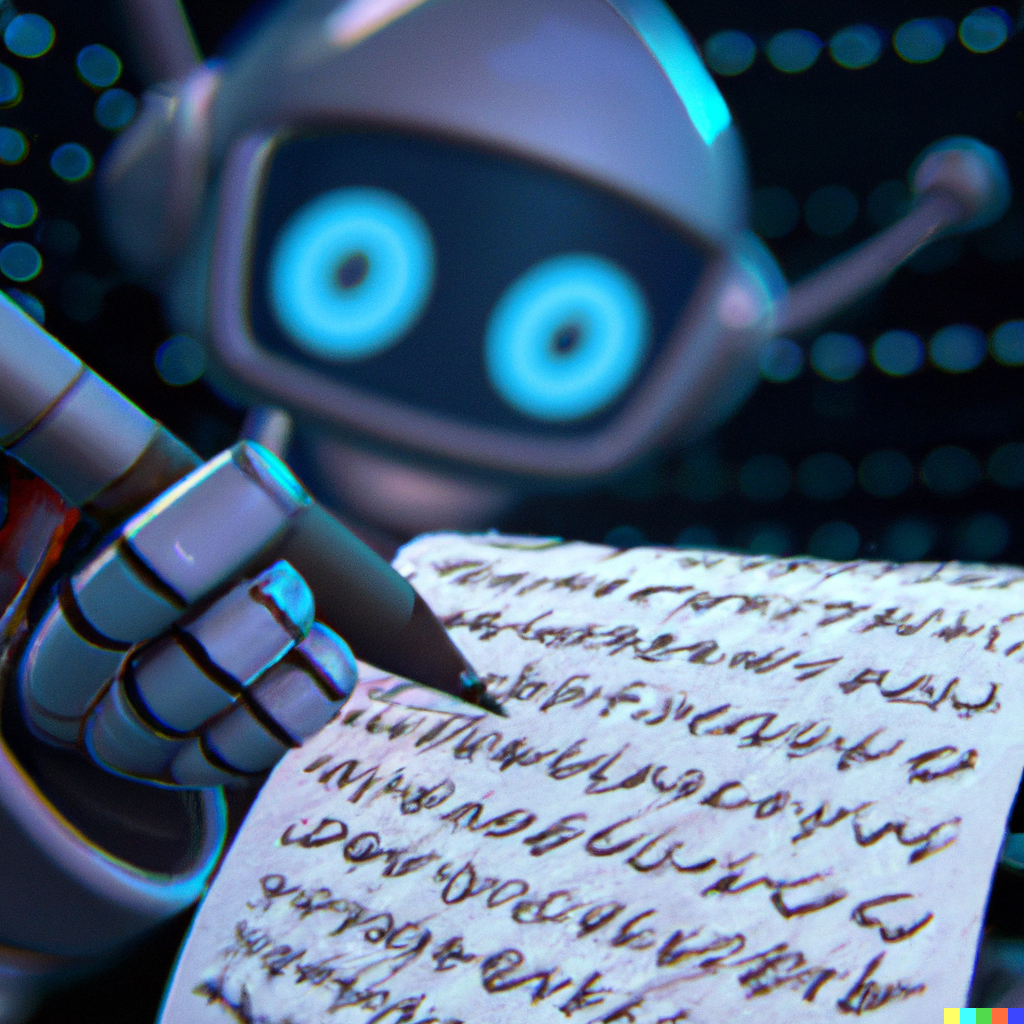

How might we teach, model, and develop AI literacy in all learners, so that they do not mistake machinic outputs for wonders? How might we leverage generative AI's wondrous powers to augment student learning and performance? Are these two questions in tension with each other?

A Call for AI Literacy

"A New York federal judge on Thursday sanctioned lawyers who submitted a legal brief written by the artificial intelligence tool ChatGPT, which included citations of non-existent court opinions and fake quotes." So begins a CNBC article that was published in June of 2023. I remember hearing about the story when listening to NPR in my car last summer.

It reminded me of an On The Media episode, "It's a Machine's World," that aired back in January of 2023, not long after OpenAI made its latest version of ChatGPT freely available to the public. Brooke Gladstone and her guest emphasize that programs like ChatGPT are “people pleasing applications,” and that it is “good for people to keep in mind that these models are, above all, designed to sound plausible.” Large Language Models like ChatGPT are less concerned with learning and conveying the "truth" than with delivering what the program predicts the user wants. This raises deep issues of trustworthiness, especially in the context of schools, and it reminds us of the importance of human judgment as well as the urgency of developing in students and faculty alike a robust form of AI literacy.

Otherwise, we risk mistaking mere signs (or outputs) for actual wonders, which can have real societal consequences. Just ask those lawyers from NY.

Plato Warned Us

Plato warned us about this. More specifically, he warned us of the power of technology and its ability to spread misinformation and misunderstanding. This was one of his (or Socrates') arguments, though not the only one, for why writing, as a technology, could be harmful:

Anyone who leaves behind him a written manual, and likewise, anyone who takes it over from him, on the supposition that such writing will provide something reliable and permanent, must be exceedingly simple-minded...

Ouch! We have definitely been living through a moment in history where we, the exceedingly simple-minded, have been misled and manipulated by the misinformation that makes up the majority of our social media and web ecosystem. In other words, Plato warned us about Elon.

He continues his argument, stating:

And once a thing is put into writing, the composition, whatever it may be, drifts all over the place, getting into the hands not only of those who understand it, but equally those who have no business with it. (521)

As an avid reader and lover of books, it's hard to concede that Plato was right about something here. Signs are powerful. Written words do things to the world and to those of us who live here. I think of pernicious texts like Protocols of the Elders of Zion and the destruction that that "fake text" caused. Plato had reason to express concern.

Trustworthiness, Explainability, Bias & Fair Use

Channeling Plato, we must acknowledge that AI is "getting into [all of our] hands," and it is powerful and often wondrous. Pushing back on Plato, it's also an exciting and positive game changer, no doubt, and it will transform schools, augment human performance, and change the way we teach and assess our students. And all of these are good things.

But how many of us are "those who understand it," to quote Plato? How many of us have the AI literacy necessary to avoid mistakes like those committed by the lawyers in NY?

Beyond the issue of trustworthiness, there are other challenges that require us to develop AI literacy.

Take explainability, for instance. There is a trade-off between high-performing but unpredictable deep neural networks and our ability to explain their complex statistical calculations and outputs. Because they rely on the probability of what will likely take place, they are rarely, but inevitably, inaccurate (the trustworthiness problem again), and they pose an “agency risk” because their inexplicable behavior can “make it even harder to attribute responsibility to a particular agent” in cases where they are mistaken (Babic, Chen, Evgeniou, & Fayard, 2021). This is one of the greatest dilemmas in the debates about responsible AI because “the abilities that [machine learning] systems are being pressed to explain may be powerful exactly because they do not arise from the use of the very concepts in terms of which their users now want their actions accounted” (Smith 66, 2019). As one expert put it, “computer scientists don’t actually know what’s going on under the hood of their systems,” and that poses challenges for us as responsible educators (Hosanagar 105, 2019).

Perhaps the most alarming issue related to artificial intelligence is the replication and amplification of bias through powerful programs whose coding and data were created, collected, and curated by flawed human beings. This is not a technical problem; it’s a human one. We cannot “assume that data itself is neutral and objective… [W]hat is vital to remember is that there’s no such thing as ‘raw data.’ Whatever data we measure and retain with our sensors, as with our bodily senses, is invariably a selection from the far broader array available to us” (Greenfield 210, 2018). This is true for coding as well, and this point matters for people-centered organizations like schools. How much biased coding (writing) and data are fueling our generative AI systems and thereby amplifying our biases such that bias, in Plato's words, "drifts all over the place"? This is the newest challenge of the (dis)information age. How are we preparing students for it?

There's also the question of "fair use" versus copyrighted material that generative AI uses to bolster its wondrous power. Take image generators like DALL-E, Midjourney, and Stable Diffusion, for instance. In her article on Medium, Kate Koidan explains that DALL-E claims to have been trained on licensed content as well as on publicly available material. Midjourney and Stable Diffusion, however, claim they simply performed "a big scrape of the internet" without seeking the permission of the artists and creators of that original content. It's no surprise that such irresponsible use of data has now led to lawsuits. So, how are we preparing students to be informed, ethical decision makers in this kind of environment?

The Future is Here

The future is here. And it involves a new kind of technological platform, what I'm calling "Artificial Intelligence Management Systems." In addition to a traditional LMS, schools will need to consider whether to adopt an AIMS as well; an exciting example is the startup FlintK12. If you haven't checked out their platform, you should.

But don't forget that before we aim to adopt the right platform for managing student use of AI, we have to develop, in collaboration with our students, an AI literacy, an understanding of AI, that informs how we respond to the signs and outputs of these wondrous machines. Otherwise we will be doing a lot of unlearning in the near future, and maybe even confirming Plato's own bias, that we should "have no business with it" in the first place.

Let's make sure that does not happen.

Fortunately, I do believe we can do both: teach AI literacy and leverage the technology's power to augment human (and student) performance. I'm curious how others are doing it, though, because this is uncharted, wondrous territory, and there are no obvious signs to lead the way.

Sources:

- Brooke Gladstone. “It’s a Machine’s World.” On The Media Podcast. WNYC Studios, National Public Radio, 13 January 2023. Accessed 24 January 2023. https://www.wnycstudios.org/podcasts/otm/episodes/on-the-media-its-a-machines-world

- Plato. Phaedrus from The Collected Dialogues of Plato. Trans. R. Hackforth (1952). Eds. Edith Hamilton and Huntington Cairns. Princeton University Press, 2009.

- Boris Babic, Daniel L. Chen, Theodoros Evgeniou, and Anne-Laure Fayard. “A Better Way to Onboard AI: Understand It As a Tool to Assist Rather Than Replace People.” Harvard Business Review. Harvard Business Review Press, Winter 2021.

- Brian Cantwell Smith. The Promise of Artificial Intelligence: Reckoning and Judgment. The MIT Press, 2019.

- Kartik Hosanagar. A Human’s Guide to Machine Intelligence: How Algorithms Are Shaping Our Lives and How We Can Stay in Control. Penguin Books, 2019.

- Adam Greenfield. Radical Technologies: The Design of Everyday Life. Verso Press, 2018.

- Kate Koidan. "Legal & ethical aspects of using DALL-E, Midjourney, & Stable Diffusion." Medium, 29 March 2023.
